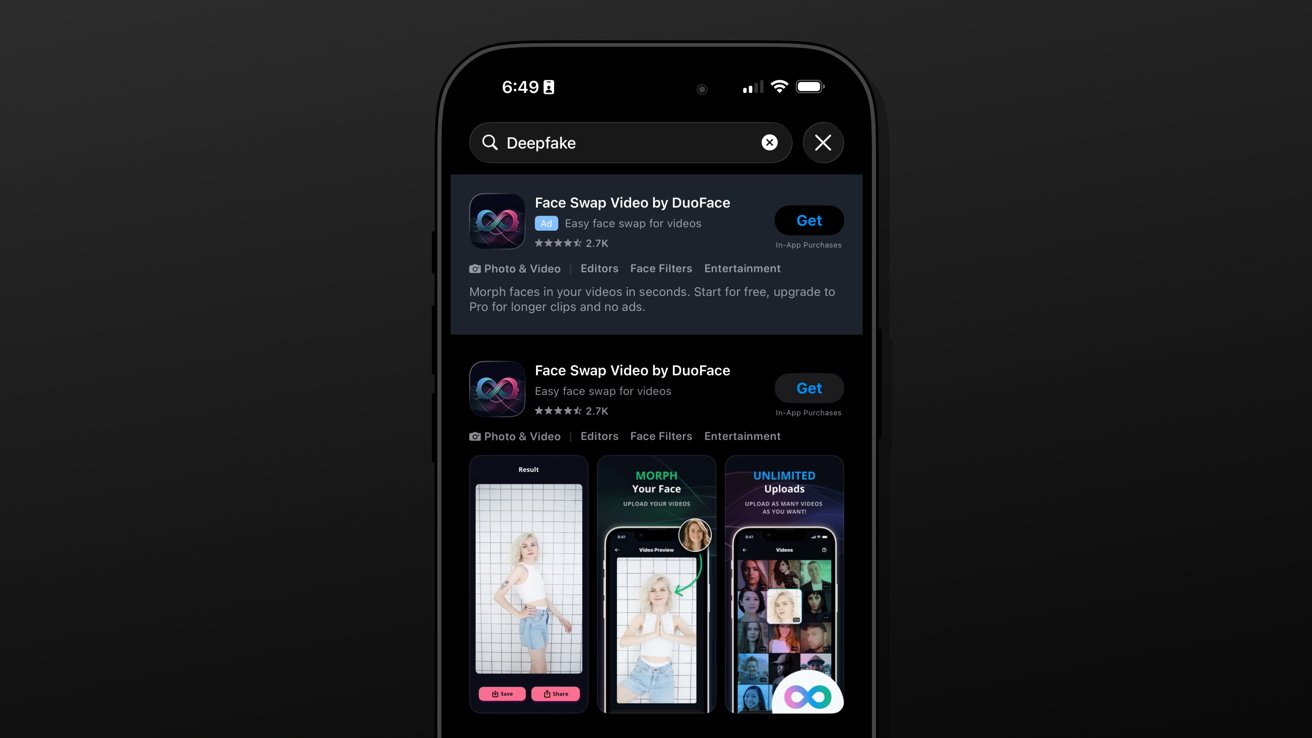

Deepfake nonconsensual porn apps are advertising in the App Store

AppleInsider reports that apps capable of creating nonconsensual deepfake sexual images and videos are still appearing in App Store search results, ads and suggestions, despite Apple having removed at least 28 similar apps in January. The article cites research from the Tech Transparency Project, which found that “nudify” apps were being promoted through App Store ads and search features.

The article says the problem is not limited to dedicated nudification apps. Some face swap or AI image apps may appear harmless, but can be used to put a real person’s face into explicit videos or to create sexualized content without consent. AppleInsider tested one app and found no meaningful guardrails, warnings or blocks when explicit material was used.

The Tech Transparency Project reportedly found 31 nudify apps that were rated as suitable for minors. AppleInsider argues this shows weaknesses in App Review, especially because some of these apps were not just available, but also monetized through ads and search placement.

Apple told AppleInsider that nudification apps are not allowed in the App Store, that developers are contacted when violations are found, and that apps may be removed if problems are not fixed. Apple also said that 15 apps from the report had already been removed, six developers had been contacted, and certain search terms had been blocked, and that ads are not shown to users under 13 and may not contain adult content.

The article concludes that Apple still has a larger enforcement problem. Even after removals and blocked terms, some apps remain available and discoverable, showing that app descriptions and age ratings are not reliable enough when AI tools can be misused for sexual abuse.